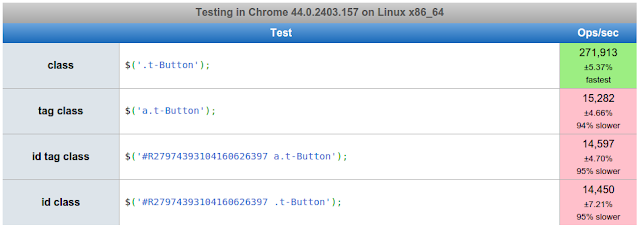

Note: This post has beeen updated to reflect me not looking very hard, but I added a performance comparison... just because.

Today I found one place where I really wanted to run a query to find references to potential data - application substitution strings.

|

| APEX Application Substitution Strings |

And yes, I have a CSS rule to right-align those names, to make it easier to read.

I couldn't find any references in the APEX dictionary (correction, see below), so I looked in the Oracle data dictionary to find where it may live.

select * from all_tab_columns where column_name like 'SUB%03';So not only are these stored in a place inaccessible to us mere-mortal developers, they are also stored in 20 sets of name/value columns - not rows.

This means if you have a dozen applications, with references in slightly different locations for each application, then string searches might be a pain.

Unpivot to the rescue!

select * from (

select id app_id, alias, name -- key facts

-- all the substitution stringz!

,substitution_string_01, substitution_value_01

,substitution_string_02, substitution_value_02

,substitution_string_03, substitution_value_03

,substitution_string_04, substitution_value_04

,substitution_string_05, substitution_value_05

,substitution_string_06, substitution_value_06

,substitution_string_07, substitution_value_07

,substitution_string_08, substitution_value_08

,substitution_string_09, substitution_value_09

,substitution_string_10, substitution_value_10

,substitution_string_11, substitution_value_11

,substitution_string_12, substitution_value_12

,substitution_string_13, substitution_value_13

,substitution_string_14, substitution_value_14

,substitution_string_15, substitution_value_15

,substitution_string_16, substitution_value_16

,substitution_string_17, substitution_value_17

,substitution_string_18, substitution_value_18

,substitution_string_19, substitution_value_19

,substitution_string_20, substitution_value_20

from apex_190200.WWV_FLOWS -- direct from underlying view

)

unpivot (

(str, val) -- new columns

for rec in -- denoted by

((substitution_string_01, substitution_value_01) as '01'

,(substitution_string_02, substitution_value_02) as '02'

,(substitution_string_03, substitution_value_03) as '03'

,(substitution_string_04, substitution_value_04) as '04'

,(substitution_string_05, substitution_value_05) as '05'

,(substitution_string_06, substitution_value_06) as '06'

,(substitution_string_07, substitution_value_07) as '07'

,(substitution_string_08, substitution_value_08) as '08'

,(substitution_string_09, substitution_value_09) as '09'

,(substitution_string_10, substitution_value_10) as '10'

,(substitution_string_11, substitution_value_11) as '11'

,(substitution_string_12, substitution_value_12) as '12'

,(substitution_string_13, substitution_value_13) as '13'

,(substitution_string_14, substitution_value_14) as '14'

,(substitution_string_15, substitution_value_15) as '15'

,(substitution_string_16, substitution_value_16) as '16'

,(substitution_string_17, substitution_value_17) as '17'

,(substitution_string_18, substitution_value_18) as '18'

,(substitution_string_19, substitution_value_19) as '19'

,(substitution_string_20, substitution_value_20) as '20'

)

)

order by app_id, str

This query transposes all the string/value columns into rows, each denonimated by the new "REC" column.Note, this query can only be executed by those with the APEX Administrator Role, and if you want to have it within a view that could be executed by other schemas, then select access on wwv_flows is needed with grant option.

|

| Columns unpivoted into rows |

By placing the query in a view, I can now make queries like this to find any substitution strings in any applications that have date references.

select * from apx_app_sub_strings where val like 'APP_DATE%';Pretty neat, well at least as far as what I was trying to do.

This query may break in future versions, as it's based on an underlying, undocumented view.

I also think that once upon a time, there were fewer pairs.

Update: As the community quickly pointed out, I missed & forgot about a view already dedicated for such a task. I was too busy looking for a column number, when really I should have used

APEX_APPLICATION_SUBSTITUTIONS.I thought I'd see how 'they' solved the problem, and I was a little surprised to see a bunch of

UNION statements.SELECT ...

f.substitution_string_18,

f.substitution_value_18,

f.substitution_string_19,

f.substitution_value_19,

f.substitution_string_20,

f.substitution_value_20

from wwv_flow_authorized auth,

wwv_flows f

where f.id = auth.application_id )

select workspace,

workspace_display_name,

application_id,

application_name,

substitution_string_01 as substitution_string,

substitution_value_01 as substitution_value

from substitution

where substitution_string_01 is not null

union all

select workspace,

workspace_display_name,

application_id,

application_name,

substitution_string_02 as substitution_string,

substitution_value_02 as substitution_value

from substitution

where substitution_string_02 is not null

union all...

After seeing this I couldn't help but run a brute for comparison, running both solutions x times.iterations:5000 16.55 secs (.003310 secs per iteration) -- union all 6.54 secs (.001308 secs per iteration) -- unpivotI made sure the underlying join was the same, and I'm not all that surprised the

unpivot did the job quicker.Update 2: It appears 20.1 has gone with unpivot.