My current project has involved rebuilding the entire aging stack for an environment that's currently 95% Forms, with any new work developed in Oracle APEX. The upgrade covers all layers - from OS, to DB, to browser, and to application builders - all heading to contemporary versions.

The general directive is like-for-like, so the applications should work the way they used to, if not a little better.

So to dumb down the process, we basically need to recompile everything on the new stack, fix the gaps, then test extensively.

***

It's been an interesting project for weighing the type of effort required to uplift a large Forms application against that required for a modest set of Oracle APEX applications. I do this with the awareness that it's like comparing apples and toaster ovens, but the outcomes have been interesting enough (to me, at least) that it's forming the basis of my next presentation.

In fairness, there wasn't too much in regard to general syntax used within Forms that needed addressing. Nothing has significantly changed in Forms functionality, and we just needed the app to function as it did previously.

We found a few environment-level settings that helped with backwards compatibility, thereby saving some rewriting.

From an infrastructure perspective, however, there were a number of aspects we needed to tackle to keep up with the modernisation of the stack. This included the launcher (Applet->FSAL), the SSO mechanism, document (blob) handling, and guiding the application to a tidy close after a timeout occurred. Compared to Oracle APEX in a modern ecosystem, the sting of that last item will linger.

***

This application has a considerable amount of blob content stored in (now deprecated) OrdImage data types, so that one item took up a large portion of the effort - from shifting the data to modifying the UI to load and view the attachments.

Oracle APEX came to the rescue for this case, replacing an old mod_plsql interface that supplemented one Form, and an ancient-looking webutil UI in another.

The saddest part of the Forms upgrade was the amount of time I needed to spend ensuring session timeout didn't get the user stuck in a dead session. Part of me kept thinking how much of that 95% we could have started to shift to APEX with that time...

***

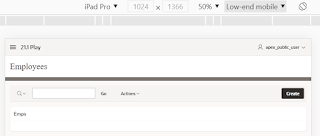

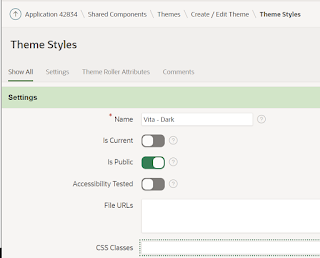

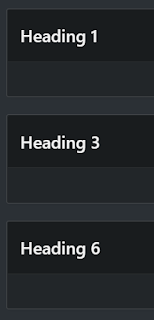

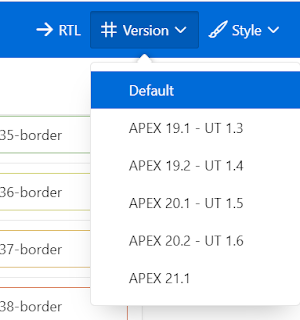

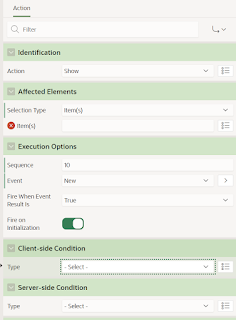

As for the APEX applications, we took advantage of the testing that was going to happen and updated the Universal Theme (UT) of the applications.

*Prompt to upgrade the APEX UT*

I'm working through the issues that came out of this for the presentation, but most of the issues we needed to remediate were due to the UT update - not the upgrade of the database and the Oracle APEX version. And even then, most of those issues were relatively benign UI differences which don't need attention. A good handful of them were deliberate changes, made for the benefit of APEX users - just against the fine grain of "like for like".

We could have upgraded both the database and the APEX version, left the UT alone, and only had a few minor version-related adjustments to take care of - and still have had a nice new bag of tricks to explore.

This is a far cry from the earlier days of APEX, where a big hurdle to upgrades was contemplating the significant cost of migrating to a newer theme with improved considerations.

But again, this wasn't necessary. I recall Joel presenting a session where he took an APEX application built some time ago, spanning a gap of multiple APEX versions. He just imported the application, and it ran without change - even when APEX was still a comparative youngster.

This methodology and mindset haven't changed. I've done a few APEX upgrades in my time, and my recollection is that any issues were related to heavy customisation - rarely native usage. Any native changes were always well documented, with a guide to transition to what is an improved outcome.

***

So today I share this heavily abbreviated and filtered anecdote of my recent explorations with Oracle technology, and once again remember Joel as a guiding force to help transform Oracle APEX into a development tool that has longevity without heavy maintenance, while still making the developer's life easier each release.

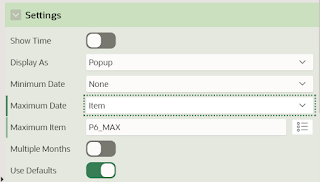

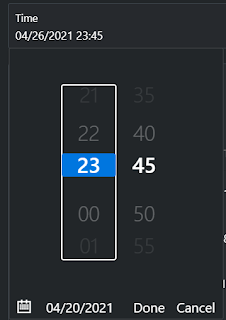

And also a feature haven for those Forms developers out there.

I can even draft next year's post with another small side story from this upgrade project, reaching deeper into the past of Oracle APEX.